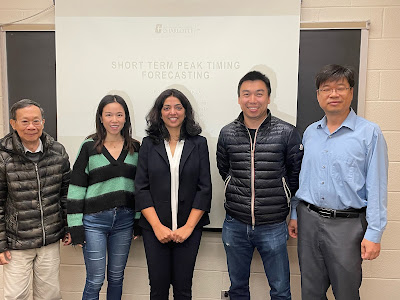

Today (November 3rd, 2023), Shreyashi Shukla defended her doctoral dissertation on short-term peak timing forecasting.

Shreyashi joined our MSEM program in Fall 2017. She completed her master thesis under my supervision in October 2018. After that, she continued working with me to pursue her PhD.

Since the first summer into her PhD program, Shreyashi started working half time at Duke Energy. She took a major role in helping millions of Duke Energy customers understand their electricity end uses. Her managers and colleagues all spoke highly of her work.

COVID hit us right in the middle of her PhD journey. She had to juggle several balls at the same time, the Duke Energy job, her dissertation research, and most importantly, her family. While many others were taking a break during the lockdown, she is making progress on her research.

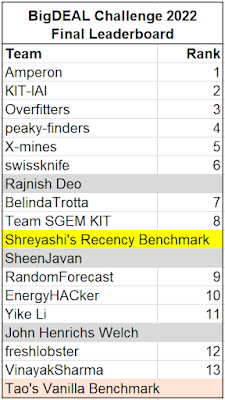

Last year Shreyashi and I organized the BigDEAL Challenge 2022, which attracted 78 teams from 27 countries. She was heavily involved in the competition design. She also took most responsibilities of competition operations.

Her dissertation work is ahead of its time. The committee was very much impressed by the thoroughness and depth of the work. Within the next few years, her name will appear in some of the most influential papers in the load forecasting literature.

Congratulations, Dr. Shukla!